OpenAI Codex has quietly crossed a line that most CIOs haven’t fully registered yet.

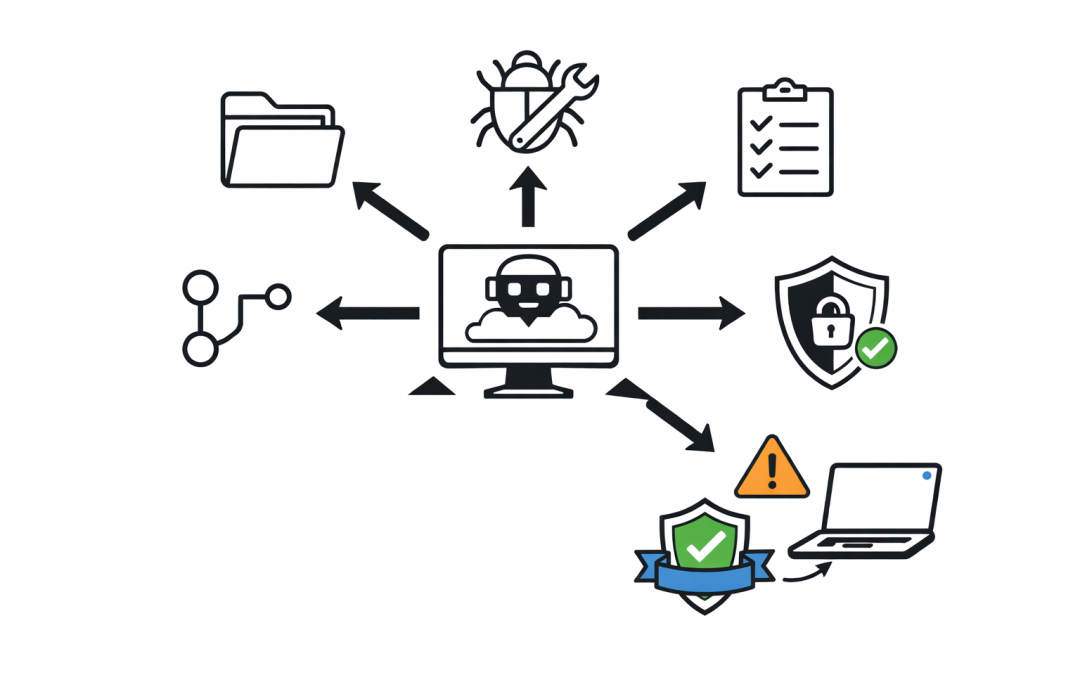

It’s no longer a code completion tool. It’s a cloud-based software engineering agent that can read a repository, run tests, fix bugs, write features, and open pull requests — all in parallel, all while the developer is doing something else.

For Australian organisations still treating AI coding tools as “nice-to-have” productivity boosters, this shift changes the conversation entirely.

What Codex Can Actually Do Now

Codex is powered by GPT-5.3-Codex, a model purpose-built for software engineering. It runs in the cloud as a delegated agent, not a sidekick in the IDE.

Developers can assign it tasks the way a manager assigns work to a contractor. Codex spins up an isolated sandbox, clones the repo, and gets to work. Each task typically takes between one and thirty minutes. The agent can run tests, iterate on failures, and produce a clean pull request that cites the terminal logs and test outputs behind its changes.

Engineering teams at Cisco, Temporal, and Superhuman are already using it in production to refactor codebases, fix integration failures, and offload repetitive work that used to break engineering focus.

The capability boundary has moved. Tasks that previously required a mid-level engineer for half a day can now be completed by an agent in minutes.

Why This Matters for Australian CIOs

Australian organisations are operating in a tight labour market. Senior engineering talent is expensive and scarce, particularly outside Sydney and Melbourne. At the same time, boards are pushing for faster delivery and lower run costs.

Codex and tools like it directly address both pressures. But they also introduce risks that many governance frameworks weren’t designed to handle.

The core question is no longer “should we allow AI coding tools?” That ship has sailed. The real questions are:

- Who is accountable when an AI agent merges code into production?

- How is code generated by an agent reviewed, tested, and audited?

- What data is leaving the organisation when a developer hands a task to Codex?

- How does this align with the Essential Eight and ACSC guidance on software supply chain integrity?

Most Australian IT teams do not have clear answers yet. That gap is where governance problems quietly accumulate.

The Shadow Adoption Problem

Individual developers are already using Codex, Claude Code, GitHub Copilot, and other agents — often on personal ChatGPT or Claude Pro accounts. They’re paying $20 a month out of pocket because it makes their jobs dramatically easier.

This is shadow AI adoption, and it’s happening in nearly every Australian organisation we encounter. The code these tools help produce is already in production systems. Source code, proprietary logic, and sometimes sensitive data have already passed through consumer AI accounts that weren’t provisioned or monitored by the business.

CIOs who haven’t explicitly addressed AI coding tools in policy are not actually preventing adoption. They’re just ensuring it happens on consumer accounts with no audit trail.

A Practical Response Framework

Organisations that are handling this well are not trying to block AI coding agents. They’re moving quickly to bring them inside the governance perimeter.

Provision enterprise accounts, not personal ones. ChatGPT Enterprise, Claude Enterprise, and GitHub Copilot Business all commit, by default, to not training models on customer data, and all provide administrative visibility. This single decision converts shadow usage into auditable usage.

Define a code review standard for AI-generated output. Code produced by an agent should not receive lighter review than code produced by a human. In many cases, it should receive stricter review — particularly around security, error handling, and dependency introduction.
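
One way to make such a standard enforceable is to express it as a merge gate. The sketch below is illustrative only: the `PullRequest` fields, the two-approval threshold, and the list of dependency manifests are assumptions for the example, not features of any particular platform.

```python
from dataclasses import dataclass, field

# Hypothetical set of file names treated as dependency manifests.
DEPENDENCY_MANIFESTS = {"package.json", "requirements.txt", "go.mod", "Cargo.toml", "pom.xml"}


@dataclass
class PullRequest:
    author_is_agent: bool
    approvals: int
    changed_files: list[str] = field(default_factory=list)
    security_review_done: bool = False


def review_blockers(pr: PullRequest) -> list[str]:
    """Return the reasons a pull request cannot merge yet (empty means OK)."""
    blockers = []
    # Assumed policy: agent-authored changes need more approvals, never fewer.
    required = 2 if pr.author_is_agent else 1
    if pr.approvals < required:
        blockers.append(f"needs {required} approvals, has {pr.approvals}")
    # Assumed policy: an agent introducing dependencies triggers a security review.
    touches_deps = any(
        path.rsplit("/", 1)[-1] in DEPENDENCY_MANIFESTS for path in pr.changed_files
    )
    if pr.author_is_agent and touches_deps and not pr.security_review_done:
        blockers.append("agent changed a dependency manifest; security review required")
    return blockers
```

In practice a rule like this would sit in a CI check or branch protection configuration. The point is that the stricter bar is written down and applied automatically rather than left to each reviewer.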

Classify what code can leave the environment. Not every repository should be accessible to a cloud-based agent. Legacy systems, regulated workloads, and IP-sensitive codebases need explicit allow-lists, not implicit access.
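
A minimal sketch of what an explicit allow-list could look like follows. The repository names, the classification labels, and the choice of which classes agents may touch are all hypothetical. The behaviour to note is that anything unclassified is denied.

```python
from enum import Enum


class Classification(Enum):
    OPEN = "open"
    INTERNAL = "internal"
    REGULATED = "regulated"
    IP_SENSITIVE = "ip_sensitive"


# Hypothetical registry: in a real setup this would come from a repo catalogue.
REPO_CLASSIFICATION = {
    "marketing-site": Classification.OPEN,
    "internal-tools": Classification.INTERNAL,
    "payments-core": Classification.REGULATED,
    "pricing-engine": Classification.IP_SENSITIVE,
}

# Assumed policy: only these classes may be handed to a cloud-based agent.
AGENT_ALLOWED = {Classification.OPEN, Classification.INTERNAL}


def agent_may_access(repo: str) -> bool:
    """Allow access only for repositories that are classified and allow-listed."""
    classification = REPO_CLASSIFICATION.get(repo)
    # Unknown repositories return None here and are denied by default.
    return classification in AGENT_ALLOWED
```

With this shape, `agent_may_access("payments-core")` and `agent_may_access("some-new-repo")` are both refused, so a new repository has to be classified before an agent can reach it.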

Update your Essential Eight posture. Software supply chain risk now includes AI-generated code. Controls such as patching applications, application control, and restricting administrative privileges all need to account for agents that can modify code and open pull requests autonomously.

Train the review layer, not just the coding layer. The bottleneck is shifting from writing code to reviewing code. Senior engineers need time, tooling, and organisational permission to reject AI-generated work that doesn’t meet the bar.

The Strategic Shift

The organisations that will pull ahead are not the ones with the most developers using Codex. They’re the ones who restructure how engineering work flows through the business.

Asynchronous delegation to agents is a different operating model from real-time pair programming. Tasks need to be well-scoped. Documentation becomes more valuable, because agents read it the same way humans do. Test coverage becomes a competitive asset, because agents iterate against tests until they pass.

This is less about the tool and more about the workflow. CIOs who treat Codex as “a faster autocomplete” will miss the actual leverage. Those who treat it as a delegation interface will restructure their engineering throughput.

What to Do This Quarter

A short, practical checklist for Australian CIOs and IT Directors:

1. Audit current AI coding tool usage across the engineering team, including personal accounts.

2. Stand up enterprise tenants for one or two AI coding platforms and migrate developers off consumer accounts.

3. Publish a short, clear policy on what can and cannot be sent to AI coding agents.

4. Update code review standards to explicitly cover AI-generated output.

5. Brief the security team on Essential Eight implications for AI-assisted development.
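
For the audit in step 1, commit history offers one partial signal, since some agents add a co-author trailer to the commits they help write. The sketch below counts such trailers. The trailer patterns are assumptions and will miss any tool, or any developer, that leaves no trace in the commit message, so treat the result as a lower bound and pair it with a developer survey.

```python
import re
from collections import Counter

# Assumed trailer patterns; verify against what each tool actually writes.
_FLAGS = re.IGNORECASE | re.MULTILINE
TOOL_PATTERNS = {
    "claude": re.compile(r"^co-authored-by:.*claude", _FLAGS),
    "copilot": re.compile(r"^co-authored-by:.*copilot", _FLAGS),
    "codex": re.compile(r"^co-authored-by:.*codex", _FLAGS),
}


def count_agent_commits(messages: list[str]) -> Counter:
    """Count commit messages carrying a co-author trailer for each tool."""
    counts = Counter()
    for message in messages:
        for tool, pattern in TOOL_PATTERNS.items():
            if pattern.search(message):
                counts[tool] += 1
    return counts
```

The commit messages could be exported from a repository with `git log` and passed in as a list of strings. Keeping the function free of any git dependency makes it easy to run across many repositories.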

None of this requires a twelve-month transformation programme. It requires clarity, speed, and executive sponsorship.

The organisations that get this right in the next two quarters will compress delivery timelines in ways that are difficult to match later. The ones that delay will still be having policy debates while their competitors are shipping.

If you’d like a second opinion on how to structure AI coding tool governance for your organisation, including Essential Eight alignment, enterprise tenant setup, and developer enablement, our team at CPI Consulting works with Australian CIOs on exactly this. Get in touch for a no-obligation conversation.