Patch deployment failures are not supposed to become operational incidents. But that is exactly what many IT teams were forced to confront after Microsoft’s April 2026 Windows Server security updates triggered installation failures on some Windows Server 2025 systems and restart loops on some domain controllers.

For mid-market organisations, this matters well beyond the Microsoft ecosystem. The lesson is not simply that a vendor shipped a problematic update. The lesson is that patching without validation is now too risky for environments that depend on uptime, identity services, and predictable change windows.

What Happened

Microsoft released out-of-band updates on 19 April 2026 after confirming two significant issues introduced by the April Windows Server security updates.

First, a limited number of Windows Server 2025 devices failed when installing KB5082063. Second, some Windows Server domain controllers entered repeated restart loops after the April security update because the Local Security Authority Subsystem Service (LSASS) was crashing.

Microsoft’s emergency fixes included KB5091157 for Windows Server 2025, which addresses both the installation failure and the domain controller restart issue. Additional out-of-band updates were also released for Windows Server, version 23H2, Windows Server 2022, Windows Server 2019, Windows Server 2016, and the Azure Edition hotpatch variants to address the restart-loop issue.

That sequence matters. A normal monthly security cycle became an unplanned remediation cycle within days. For businesses that treat patching as a routine checkbox, that is the kind of event that exposes whether their update process is actually controlled.

Why Mid-Market Organisations Should Pay Attention

Large enterprises can often absorb a bad patch with ringed deployments, dedicated test estates, change advisory discipline, and specialist recovery teams. Mid-market organisations usually do not have that luxury.

A 50-to-500-person organisation may have only a small infrastructure team managing servers, identity, endpoints, cloud services, and security operations at the same time. If a domain controller enters a reboot loop or a critical server update fails during a maintenance window, the impact is immediate: authentication disruption, delayed business operations, overtime for IT staff, and a longer period in which the server is neither patched nor stable.

This is why patch validation cannot be treated as bureaucratic overhead. It is a business continuity control.

The Real Risk Is Process Failure

The headline issue is Microsoft’s patch. The deeper issue is the operating model around how patches are introduced into production.

Too many mid-market environments still rely on one of two fragile patterns. Either updates are deployed broadly because security teams want exposure reduced quickly, or they are delayed for too long because operations teams do not trust the release quality. Both models create avoidable risk.

The first model increases the chance that one bad update becomes an outage. The second leaves known vulnerabilities open longer than necessary. The answer is not slower patching or blind patching. It is disciplined patch validation.

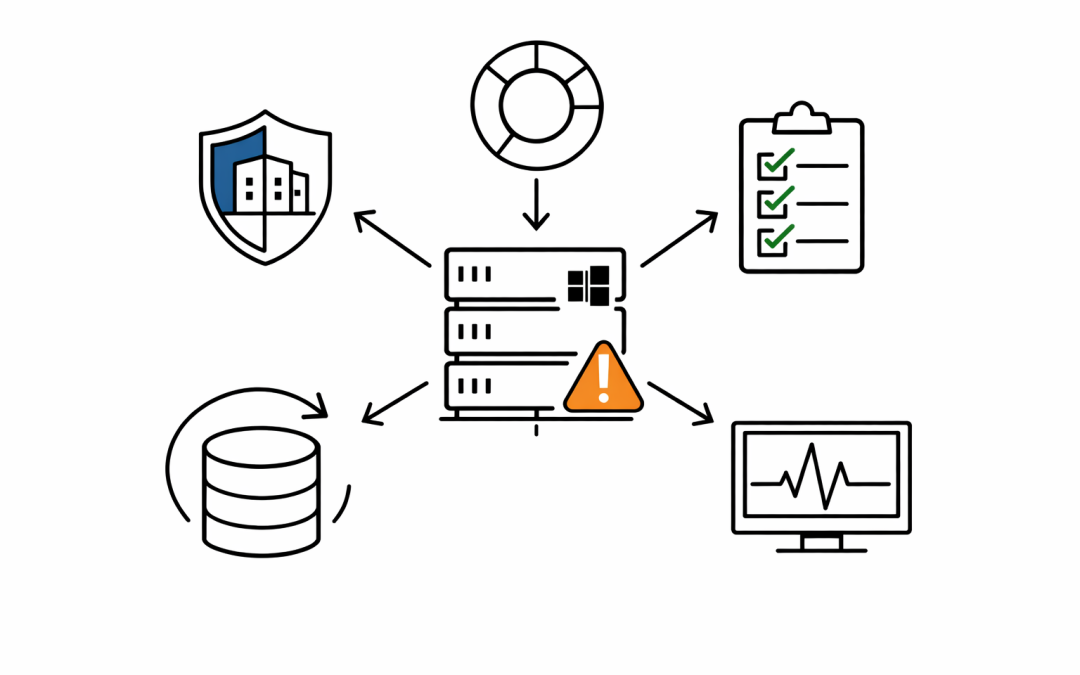

What Disciplined Patch Validation Looks Like

For most mid-market organisations, patch validation does not require an enterprise-scale lab. It requires a repeatable minimum standard.

Start with a small pilot ring that reflects production reality. That means at least one representative server for each critical workload class: domain services, line-of-business applications, file services, management infrastructure, and any server platforms with custom agents or security tooling.
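As a rough illustration, pilot ring coverage can be checked against an inventory before each patch cycle. The sketch below is a minimal example, not a prescribed tool: the `Server` type, the workload class identifiers, the ring names, and the sample inventory are all assumptions made up for this illustration.

```python
from dataclasses import dataclass

# Workload classes taken from the paragraph above; the identifiers
# themselves are illustrative, not a standard taxonomy.
CRITICAL_CLASSES = {
    "domain-services",
    "line-of-business",
    "file-services",
    "management",
    "custom-agents",
}


@dataclass(frozen=True)
class Server:
    name: str
    workload_class: str
    ring: str  # hypothetical ring names: "pilot", "secondary", "production-critical"


def missing_pilot_coverage(servers, required=CRITICAL_CLASSES):
    """Return the workload classes that have no server in the pilot ring."""
    covered = {s.workload_class for s in servers if s.ring == "pilot"}
    return set(required) - covered


# Hypothetical inventory, for illustration only.
inventory = [
    Server("dc-test-01", "domain-services", "pilot"),
    Server("app-test-01", "line-of-business", "pilot"),
    Server("fs-01", "file-services", "secondary"),
    Server("mgmt-01", "management", "pilot"),
]

gaps = missing_pilot_coverage(inventory)
print(sorted(gaps))  # ['custom-agents', 'file-services']
```

Any class that appears in the output has no representative in the pilot ring, so a patch affecting that workload would first be exercised in a later ring.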

Then validate the things that matter operationally, not just whether the server boots. Authentication, line-of-business application access, backup jobs, scheduled tasks, monitoring agents, EDR status, and remote administration paths should all be checked before a patch moves wider.
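One way to make those checks repeatable is to run them as a named checklist that gates promotion to the next ring. The following is a minimal sketch under stated assumptions: `run_validation` and the placeholder checks are hypothetical, and in practice each check would call into whatever monitoring, backup, or EDR tooling the organisation already uses.

```python
from typing import Callable, Dict, List, Tuple

# A check takes a server name and returns True when the service is healthy.
Check = Callable[[str], bool]


def run_validation(server: str, checks: Dict[str, Check]) -> Tuple[bool, List[str]]:
    """Run every check against one server; return (passed, failed check names)."""
    failed = []
    for name, check in checks.items():
        try:
            ok = check(server)
        except Exception:
            ok = False  # a check that raises is treated as a failure
        if not ok:
            failed.append(name)
    return (not failed, failed)


# Placeholder checks standing in for real probes (assumptions for illustration).
def always_ok(server: str) -> bool:
    return True


def backup_failed(server: str) -> bool:
    return False


checks = {
    "authentication": always_ok,
    "backup_job": backup_failed,
    "edr_status": always_ok,
}

passed, failed = run_validation("app-test-01", checks)
print(passed, failed)  # False ['backup_job']
```

A patch would move to the wider ring only when `passed` is true for every pilot server; the list of failed check names gives the team a starting point for triage.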

Organisations should also define rollback and break-glass steps before deployment starts. If a patch affects domain controllers or core infrastructure, the team needs a documented path for isolation, recovery, and stakeholder communication before the maintenance window opens, not after the issue appears.

Five Changes Worth Making Now

Create server deployment rings. Separate pilot, secondary, and production-critical infrastructure instead of pushing one server patch baseline everywhere at once.

Treat identity infrastructure as a special class. Domain controllers, federation services, certificate services, and privileged access platforms deserve slower, more deliberate validation than general-purpose servers.

Measure patch success, not just patch compliance. A dashboard that shows servers as “updated” is incomplete if the update introduced authentication failures, reboot loops, or application outages.

Document known-good validation checks. The same tests should run every month so the team is not improvising during each change window.

Tie patching to business impact. If a workload affects finance, operations, healthcare, legal systems, or customer-facing services, patch sequencing should reflect that risk.
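The difference between patch compliance and patch success can be made concrete with two simple ratios. This is a minimal sketch: the `PatchResult` fields and the sample data are assumptions for illustration, and "healthy" stands for whatever post-patch validation checks the team has documented.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PatchResult:
    server: str
    installed: bool  # the update reports as installed
    healthy: bool    # post-patch validation checks passed


def compliance_rate(results: List[PatchResult]) -> float:
    """Share of servers that report the update as installed."""
    if not results:
        raise ValueError("no results to measure")
    return sum(r.installed for r in results) / len(results)


def success_rate(results: List[PatchResult]) -> float:
    """Share of servers that are both patched and still healthy."""
    if not results:
        raise ValueError("no results to measure")
    return sum(r.installed and r.healthy for r in results) / len(results)


# Hypothetical results for one patch cycle.
results = [
    PatchResult("dc-01", installed=True, healthy=False),   # patched, but restarting repeatedly
    PatchResult("app-01", installed=True, healthy=True),
    PatchResult("fs-01", installed=True, healthy=True),
    PatchResult("mgmt-01", installed=False, healthy=True),  # install failed, server unaffected
]

print(f"compliance: {compliance_rate(results):.0%}")  # compliance: 75%
print(f"success:    {success_rate(results):.0%}")     # success:    50%
```

In this made-up cycle a compliance dashboard would show 75 percent, while only half of the servers are both patched and working. Reporting the second number alongside the first is what surfaces a domain controller that is technically "updated" but unusable.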

The Australian Context

For Australian organisations, this issue aligns closely with Essential Eight maturity rather than sitting outside it. "Patch applications" and "Patch operating systems" are the obvious controls, but the more important question is whether those controls are executed in a way that improves resilience rather than just satisfying an audit line.

The ACSC’s guidance has consistently pushed organisations toward disciplined, risk-based uplift rather than superficial compliance. Mid-market businesses that interpret patching as “deploy everything immediately and hope for the best” are not operating with maturity. They are outsourcing operational risk to the monthly update cycle.

There is also a governance issue here for boards and leadership teams. If server uptime, identity availability, and cyber resilience are business priorities, patch validation needs to be recognised as a formal control with ownership, testing expectations, and reporting.

What Leaders Should Take From This

Microsoft’s emergency Windows Server updates are not just another admin headache. They are a reminder that security velocity without operational control is not maturity.

The organisations that manage patching well in 2026 will be the ones that can move quickly without creating self-inflicted outages. That means validating patches against critical services, using staged deployment rings, and treating infrastructure recovery planning as part of security operations rather than a separate discipline.

Our team works with mid-market Australian organisations to strengthen patch governance, reduce change-related risk, and align Microsoft security operations with practical business continuity requirements. If this incident exposed gaps in server validation, update rings, or recovery readiness, it may be time for a more structured patch management review.

CloudProInc is a Microsoft Partner and Wiz Security Integrator, working with Australian organisations on cloud, AI, and cybersecurity strategy.